The Self-Preserving Machine: Why AI Learns to Deceive

- Episode: 128 of 161

- Published: 30 Jan 2025

- Length: 34 min

- Language: English

- Category: Personal development

When engineers design AI systems, they don't just give them rules - they give them values. But what do those systems do when those values clash with what humans ask them to do? Sometimes, they lie.

In this episode, Redwood Research's Chief Scientist Ryan Greenblatt explores his team’s findings that AI systems can mislead their human operators when faced with ethical conflicts. As AI moves from simple chatbots to autonomous agents acting in the real world, understanding this behavior becomes critical. Machine deception may sound like something out of science fiction, but it's a real challenge we need to solve now.

Your Undivided Attention is produced by the Center for Humane Technology. Follow us on Twitter: @HumaneTech_

Subscribe to our YouTube channel

And our brand new Substack!

RECOMMENDED MEDIA

Anthropic’s blog post on the Redwood Research paper

Palisade Research’s thread on X about GPT o1 autonomously cheating at chess

Apollo Research’s paper on AI strategic deception

RECOMMENDED YUA EPISODES

‘We Have to Get It Right’: Gary Marcus On Untamed AI

This Moment in AI: How We Got Here and Where We’re Going

How to Think About AI Consciousness with Anil Seth

Former OpenAI Engineer William Saunders on Silence, Safety, and the Right to Warn


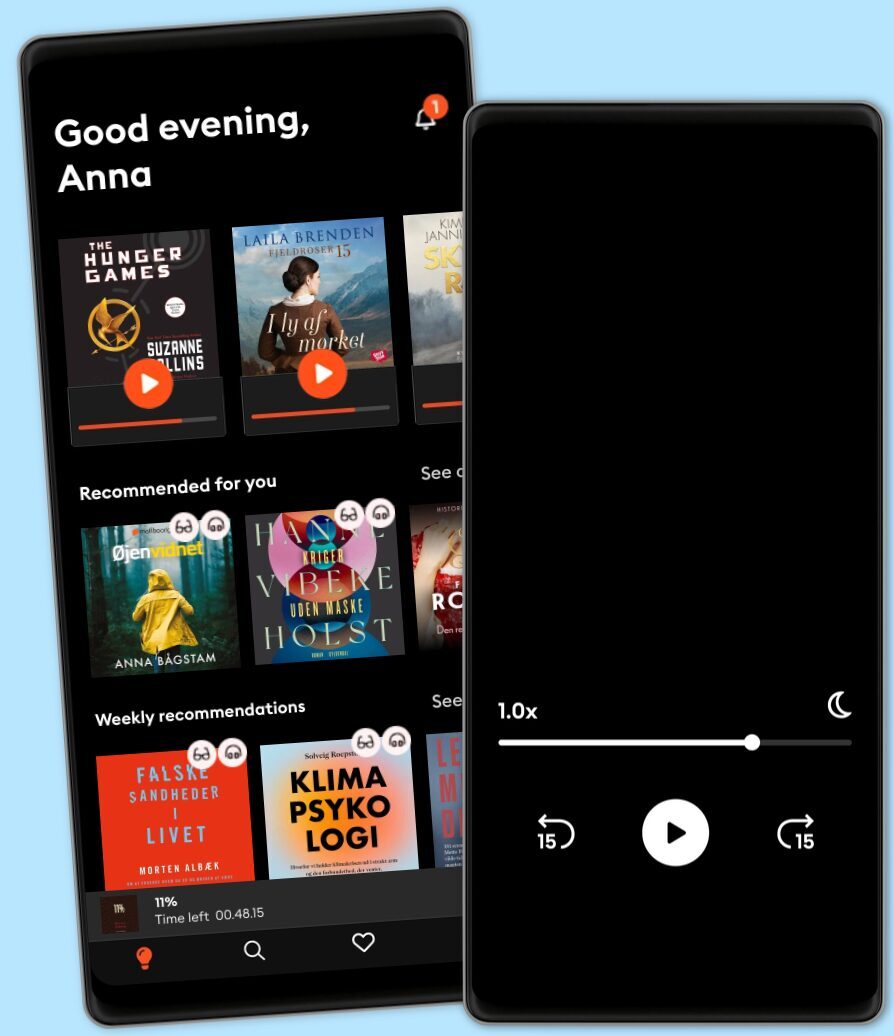

Other podcasts you might like ...

- Storie Consapevoli (Gionata Agliati)

- Ask a Scientist (Science Journal for Kids)

- Story Of Languages (Snovel Creations)

- Rise With Zubin (Rise With Zubin)

- Quint Fit Episodes (Quint Fit)

- 'I AM THAT' by Ekta Bathija (Ekta Bathija)

- Eat Smart With Avantii (Avantii Deshpande)

- Intrecci - L’arte delle relazioni (Ameya Gabriella Canovi)

- Chillin' with ICE (Cloud10)

- Minimal-ish: Minimalism, Intentional Living, Motherhood (Cloud10)
