LoRA Techniques for Large Language Model Adaptation: The Complete Guide for Developers and Engineers

- Publisher: HiTeX Press

- Language: English

- Format: E-book

Facts

"LoRA Techniques for Large Language Model Adaptation"

"LoRA Techniques for Large Language Model Adaptation" offers a comprehensive deep dive into the principles, mechanics, and practicalities of adapting large language models (LLMs) using Low-Rank Adaptation (LoRA). Beginning with an insightful overview of the evolution and scaling of LLMs, the book systematically addresses the challenges inherent in adapting foundation models, highlighting why traditional fine-tuning methods often fall short in efficiency and scalability. Drawing on real-world use cases and the burgeoning adoption of LoRA across both research and industry, it situates readers at the cutting edge of parameter-efficient fine-tuning techniques.

The work stands out for its rigorous treatment of the mathematical and engineering foundations underpinning LoRA. Through detailed explorations of low-rank matrix decomposition, formal parameter mappings, and empirical strategies for rank selection, readers gain a robust understanding of both the theoretical expressivity and practical impact of LoRA compared to other adaptation techniques. The text moves beyond the abstract, offering actionable guidance for integrating LoRA into modern transformer architectures, optimizing training for scalability and resource constraints, and leveraging composable and hybrid approaches to meet diverse adaptation goals.
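To make the low-rank idea concrete, here is a minimal sketch of the standard LoRA formulation from Hu et al. (2021). This is not code from the book. A frozen weight matrix W0 (d x k) is adapted by a trainable product B A, where B is d x r, A is r x k, and r is much smaller than d and k. The update is scaled by alpha / r, so the forward pass is h = W0 x + (alpha / r) B A x. B is initialised to zero, so the adapted layer initially behaves exactly like the base layer. The helper names (`matvec`, `lora_forward`, `merged_weight`, `trainable_params`) are hypothetical, and plain Python lists are used to keep the sketch dependency-free.

```python
from typing import Dict, List

Matrix = List[List[float]]
Vector = List[float]


def matvec(m: Matrix, v: Vector) -> Vector:
    """Multiply a matrix (list of rows) by a vector."""
    return [sum(mij * vj for mij, vj in zip(row, v)) for row in m]


def lora_forward(x: Vector, w0: Matrix, a: Matrix, b: Matrix, alpha: float) -> Vector:
    """Compute h = W0 x + (alpha / r) * B (A x).

    w0: frozen base weight, shape (d, k)
    a:  trainable down-projection, shape (r, k)
    b:  trainable up-projection, shape (d, r), zero-initialised in LoRA
    """
    r = len(a)                        # adapter rank
    base = matvec(w0, x)              # frozen path: W0 x
    delta = matvec(b, matvec(a, x))   # low-rank path: B (A x), never forms B A
    scale = alpha / r
    return [h + scale * d for h, d in zip(base, delta)]


def merged_weight(w0: Matrix, a: Matrix, b: Matrix, alpha: float) -> Matrix:
    """Fold the adapter into the base weight: W = W0 + (alpha / r) B A.

    After merging, inference costs the same as the original layer.
    """
    r = len(a)
    scale = alpha / r
    return [
        [w0[i][j] + scale * sum(b[i][t] * a[t][j] for t in range(r))
         for j in range(len(w0[0]))]
        for i in range(len(w0))
    ]


def trainable_params(d: int, k: int, r: int) -> Dict[str, int]:
    """Trainable parameters for full fine-tuning of W0 versus a rank-r adapter."""
    return {"full": d * k, "lora": r * (d + k)}


if __name__ == "__main__":
    counts = trainable_params(4096, 4096, 8)
    print(counts)                                # -> {'full': 16777216, 'lora': 65536}
    print(f"{counts['lora'] / counts['full']:.2%}")  # -> 0.39%
```

For a single 4096 x 4096 projection, a rank-8 adapter trains 65,536 parameters instead of about 16.8 million. This is the efficiency argument behind parameter-efficient fine-tuning. `merged_weight` reflects a common serving choice: fold the adapter into W0 when only one adaptation is needed, or keep A and B separate when several adapters must share one base model.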

Bridging theory and application, the book culminates in advanced chapters on operationalizing LoRA in real-world settings, evaluating adaptation effectiveness, and innovating for next-generation language models. It presents a rich collection of strategies for serving LoRA-augmented models in production, maintaining long-term adaptability, and meeting the needs of privacy-conscious environments. Through tutorials, case studies, and a survey of open-source tools, "LoRA Techniques for Large Language Model Adaptation" provides a definitive resource for machine learning practitioners, researchers, and engineers seeking to master the art and science of efficient large model adaptation.

© 2025 HiTeX Press (E-book): 6610000965120

Release date

E-book: 13 July 2025

Andre kan også lide...

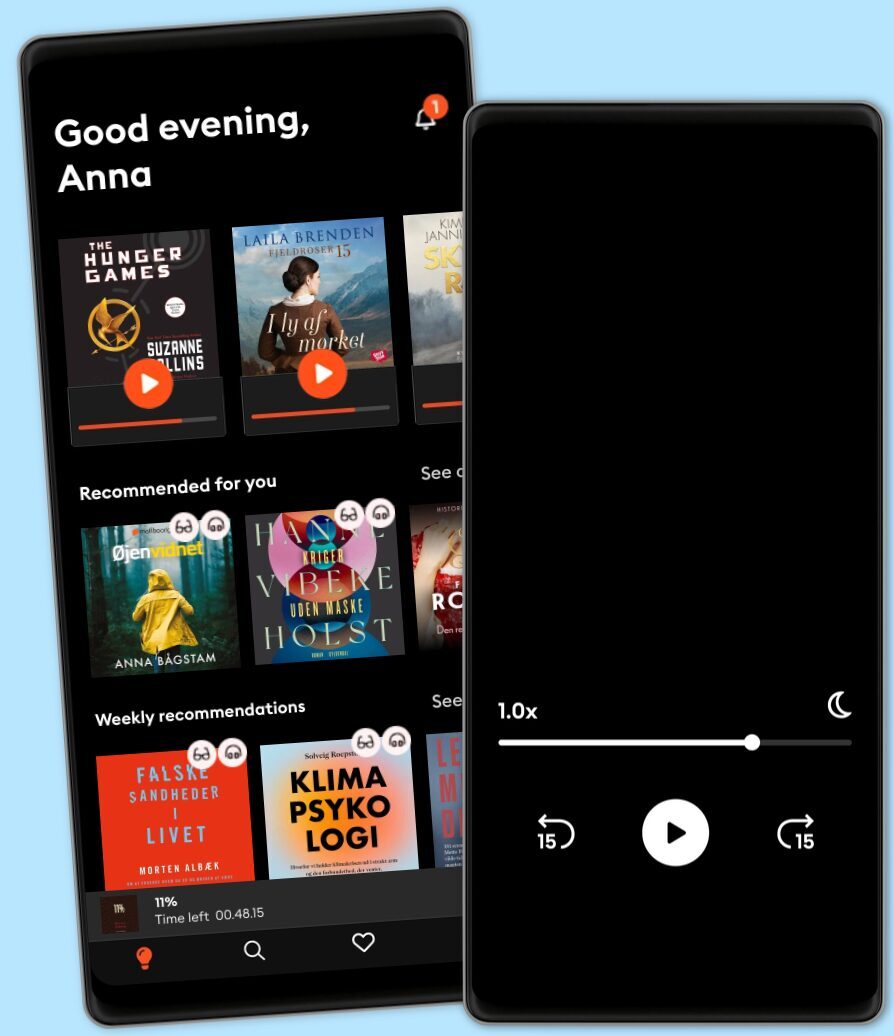

Vælg dit abonnement

Over 1 million titler

Download og nyd titler offline

Eksklusive titler + Mofibo Originals

Børnevenligt miljø (Kids Mode)

Det er nemt at opsige når som helst

Premium

For dig som lytter og læser ofte.

129 kr. /måned

Eksklusivt indhold hver uge

Fri lytning til podcasts

Ingen binding

Unlimited

For dig som lytter og læser ubegrænset.

159 kr. /måned

Eksklusivt indhold hver uge

Fri lytning til podcasts

Ingen binding

Family

For dig som ønsker at dele historier med familien.

Fra 179 kr. /måned

Fri lytning til podcasts

Kun 39 kr. pr. ekstra konto

Ingen binding

179 kr. /måned

Flex

For dig som vil prøve Mofibo.

89 kr. /måned

Gem op til 100 ubrugte timer

Eksklusivt indhold hver uge

Fri lytning til podcasts

Ingen binding

Har du en rabatkode?

Indtast koden her